The game Wordle has a lot of speculation online about what is the "best" first word. If we are exploring optimal strategies to solve the original game in the least number of guesses, most of it is wrong.

For humans, almost all of these words are great! However for optimal strategies, we need to examine all of the guesses, not just the first word. It turns out, it's possible to solve 99% of all puzzles in only 4 guesses or with an average of ~3.42 guesses per win, but not with most of the "best" words found online.

Try out my solver with the best strategies that have been found so far.

It's important to note that Wordle has a specific set of "target" words (that could be the daily word in a puzzle), and a much larger set of "guessable" words (that you can use to guess, but will never be the daily word). All of this is specific to the exact word lists that Wordle currently uses.

Decision Trees and Metrics

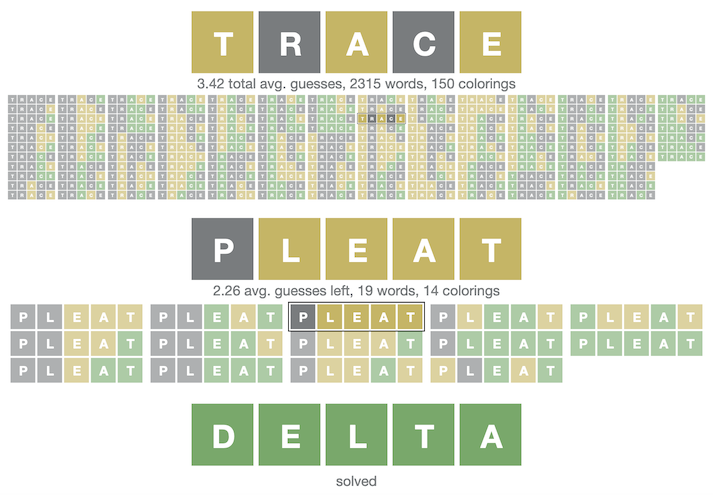

Each (non-stochastic) strategy can be represented by a decision tree, where there is a single initial guess, and each possible resulting coloring (grey/yellow/green) in the game will also be paired with a second guess, and so on. The entire game is mapped out. My best "strategies" so far are hopefully a good example.

For whatever metric we are judging strategies by, there will be one or more "best" strategies. There are a few metrics that I've used. After seeing most words solvable in less than 5 guesses, my initial metric was "words that can be solved in 4 or fewer guesses", with a goal of all words being solvable that way. I have not run into this case (18 words short!!), and I'm in progress computationally verifying that this is not possible (the heuristically best third of all words have been exhaustively checked). I've switched to minimizing the metric "average guesses per win".

Deep vs. Shallow Solutions

Most of the "best" words discussed online have been computed by examining the effects/eliminations/entropy of just the first guess, however this can actually result in non-optimal strategies!

For any list of target and a guessable word, we can partition the target words into distinct sets by determining what colors they would get for a given guess. For example, if our guess is TRACE, we will have one set of words where the first letter is gray and the rest are green (BRACE, GRACE). We tend to gain a lot of information when most of these sets are small, and I've based search heuristics off of a combination of the size of the largest set and the average size of sets.

This lets us see what guesses give us the most information at that stage, however, the best guess at this stage may result in some sets that are more difficult to solve.

This is solvable by recursively exploring the space of all possible strategies, to see which ones have the highest values for a given metric. Using the heuristics above (based on how the partitioned sets are sized), tree searches have found more optimized strategies with different starting words.

Results

The best strategy I've found for the metric of "most 4-guess words" starts with RANCE (99.22%, missing just 18 words), with RANTS/RATED/RONTE/ALTER close by. The best for "fewest average guesses" is currently SALET (3.42117), with REAST/CRATE/TRACE/SLATE close by.

All of these examples are available at https://jonathanolson.net/wordle-solver/.

Code and Computations

The code I've used to run these searches is available. It's in a rough state right now, however I'll likely refine it in the upcoming weeks. The tree search logic has two types of nodes, since we need one to store different guesses for the same set of words (ComputationNode), and one that has a map of all possible scores given a guess (GuessNode). I'm able to compute and serialize these trees (currently at 6GB of data on disk), and then efficiently compute optimized strategies given different metrics (createTree).

I've used scanning for 4-guess strategies, using a separate optimized section of code that isn't constructing a persistent tree. It doesn't look possible to have a strategy that can always solve Wordle puzzles in only 4 guesses every time.